Kimi K2.5 is a foundation model built on a Mixture-of-Experts (MoE) architecture, with strong coding and agent capabilities. We use Claude Code and VS Code with Cline/RooCode as examples to show how to use the Kimi K2.5 series models.

Usage Notes

When using large models for code generation, the randomness of the model and the complexity of the task mean that several attempts may be needed before the generated code meets expectations. Programming tools automatically retry and issue multiple rounds of calls, which can make token usage grow quickly. To control costs and improve the user experience, we recommend paying attention to the following points:

- Budget Control

  - Set Daily Spending Limit: Before use, go to the Kimi Open Platform Project Settings and configure the “Project Daily Spending Budget”. Once the budget limit is reached, the system automatically rejects all API requests under this project (Note: Due to billing delays, the limit may take about 10 minutes to take effect). For setup instructions, see Organization Management Best Practices.

  - Balance Alert Reminder: We recommend enabling the account balance reminder. When the account balance falls below the preset amount (default ¥20), the system notifies you via SMS so that you can recharge in time.

- Usage Recommendations

  - Continuous Monitoring: Keep an eye on the programming software while it is running, and handle abnormal situations promptly to avoid unnecessary resource consumption caused by infinite loops or excessive retries.

  - Model Selection: If response speed is not a high priority, you can choose to use the `kimi-k2.5` model.
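The monitoring advice above can also be backed by a client-side check. The following is a minimal sketch and not part of the Kimi platform: `TokenBudget` and `BudgetExceeded` are hypothetical names, and the code assumes each API response is a dictionary carrying an OpenAI-style `usage.total_tokens` field.

```python
class BudgetExceeded(RuntimeError):
    """Raised when the local token budget has been used up."""


class TokenBudget:
    """Hypothetical client-side guard, independent of the platform spending limit."""

    def __init__(self, max_tokens: int) -> None:
        if max_tokens <= 0:
            raise ValueError("max_tokens must be positive")
        self.max_tokens = max_tokens
        self.used = 0

    def record(self, response: dict) -> int:
        """Add the tokens reported by one response and return the remaining budget.

        Assumes an OpenAI-style body: {"usage": {"total_tokens": <int>}}.
        Responses without a usage field count as zero tokens.
        """
        usage = response.get("usage") or {}
        self.used += int(usage.get("total_tokens", 0))
        if self.used > self.max_tokens:
            raise BudgetExceeded(f"used {self.used} of {self.max_tokens} tokens")
        return self.max_tokens - self.used


budget = TokenBudget(max_tokens=1000)
print(budget.record({"usage": {"total_tokens": 400}}))  # prints 600
```

A guard like this only stops your own script. It does not replace the Project Daily Spending Budget, which is enforced on the platform side.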

K2 Vendor Verifier

Kimi models have always focused on agentic loops, where the reliability of tool calls is crucial. To this end, we launched K2 Vendor Verifier (K2VV) to evaluate the quality of Kimi API tool calls served by different vendors, so that you can directly compare tool-call accuracy across vendors.

Latest Update: K2VV has been expanded to 12 vendors, and more test data has been open-sourced. Feedback on the test metrics you care about is welcome.

Obtaining API Key

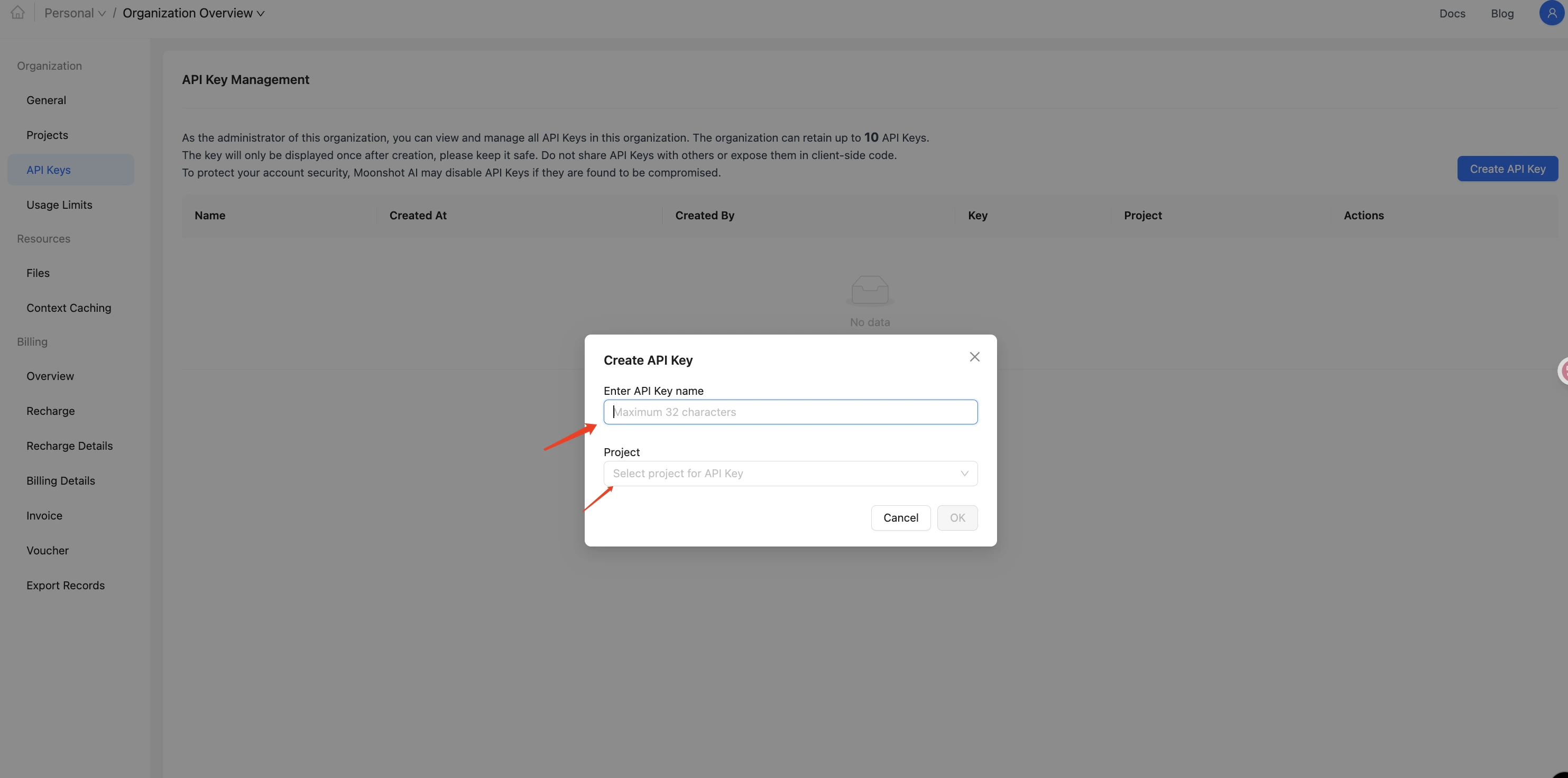

- Visit the Open Platform at https://platform.kimi.ai/console/api-keys to create and obtain an API Key, selecting the default project.
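Once a key has been created, a quick check that it is accepted can save debugging time later. The sketch below rests on assumptions that should be confirmed against the platform documentation: that the API exposes an OpenAI-compatible model list at `https://api.moonshot.ai/v1/models`, and that the key is stored in an environment variable named `MOONSHOT_API_KEY` (a hypothetical name chosen for this example).

```python
import json
import os
import urllib.request

# Assumed OpenAI-compatible endpoint; confirm it in the platform documentation.
MODELS_URL = "https://api.moonshot.ai/v1/models"


def auth_headers(api_key: str) -> dict:
    """Build the Bearer header used by OpenAI-compatible APIs."""
    if not api_key.strip():
        raise ValueError("API key is empty")
    return {"Authorization": f"Bearer {api_key.strip()}"}


def list_model_ids(api_key: str) -> list:
    """Return the model IDs visible to this key (performs a network request)."""
    request = urllib.request.Request(MODELS_URL, headers=auth_headers(api_key))
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    return [model["id"] for model in body.get("data", [])]


if __name__ == "__main__":
    key = os.environ.get("MOONSHOT_API_KEY", "")  # hypothetical variable name
    if key:
        print("kimi-k2.5" in list_model_ids(key))
    else:
        print("Set MOONSHOT_API_KEY to run the check.")
```

Reading the key from the environment keeps it out of source files and shell history.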

Using Kimi K2.5 model in Claude Code

Install Claude Code

If you have already installed Claude Code, you can skip this step.

MacOS and Linux

Windows

Configure Environment Variables

After installing Claude Code, set the environment variables as follows to use the `kimi-k2.5` model, then start Claude.

MacOS and Linux

Windows

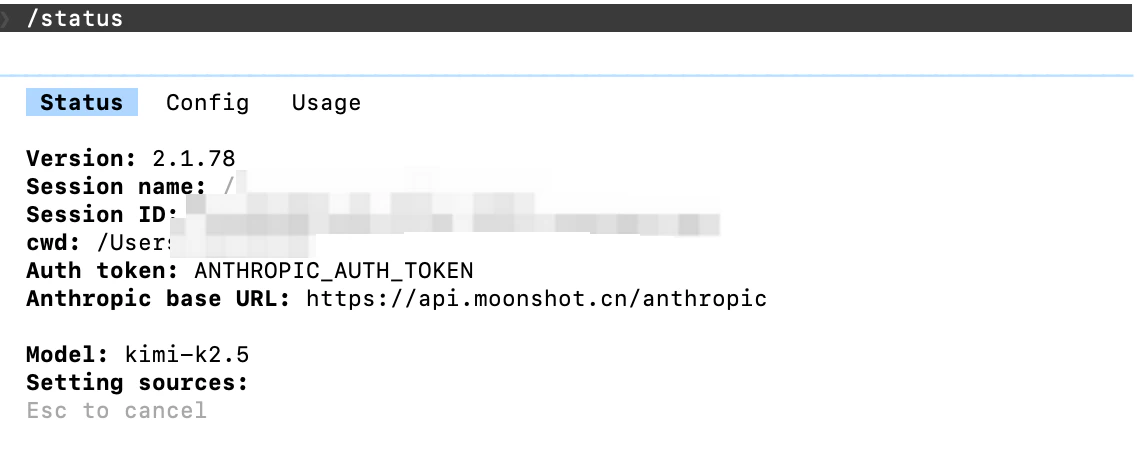

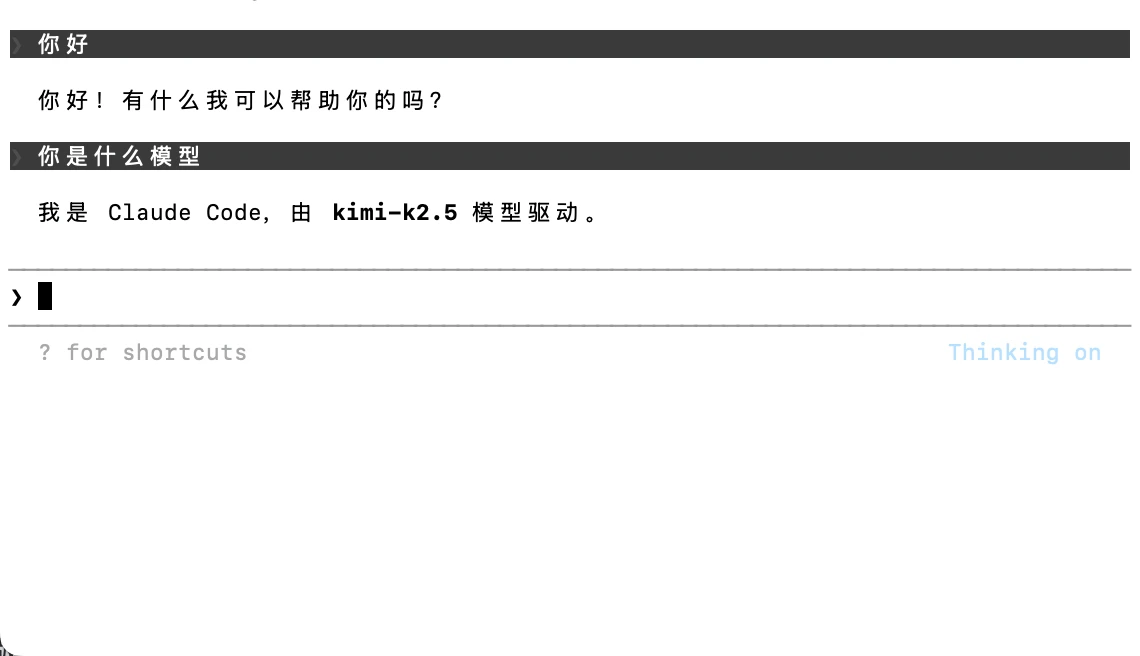
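As a cross-platform illustration of the configuration step, the following sketch builds the environment in Python and starts Claude Code with it. It is an assumption-laden example rather than the official commands: `ANTHROPIC_BASE_URL`, `ANTHROPIC_AUTH_TOKEN` and `ANTHROPIC_MODEL` are the variables Claude Code is assumed to read, the endpoint `https://api.moonshot.ai/anthropic` should be checked against the platform documentation, and `MOONSHOT_API_KEY` and `kimi_claude_env` are hypothetical names.

```python
import os
import shutil
import subprocess


def kimi_claude_env(api_key: str, base_env=None) -> dict:
    """Return a copy of the environment with the Kimi settings added."""
    env = dict(os.environ if base_env is None else base_env)
    env.update({
        # Assumed Anthropic-compatible endpoint; verify before relying on it.
        "ANTHROPIC_BASE_URL": "https://api.moonshot.ai/anthropic",
        "ANTHROPIC_AUTH_TOKEN": api_key,
        "ANTHROPIC_MODEL": "kimi-k2.5",
    })
    return env


if __name__ == "__main__":
    key = os.environ.get("MOONSHOT_API_KEY")  # hypothetical variable name
    claude = shutil.which("claude")  # path of the Claude Code executable, if installed
    if key and claude:
        subprocess.run([claude], env=kimi_claude_env(key), check=False)
    else:
        print("Set MOONSHOT_API_KEY and install Claude Code first.")
```

Because the variables are passed only to the child process, the rest of the shell session keeps its original settings.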

Verify Environment Variables

Enter `/status` in Claude Code to check the model status:

- How to experience the thinking capabilities of `kimi-k2.5` in Claude Code

  - After configuring the model and entering the Claude Code page, press `Tab` to switch thinking on. A “Thinking on” indicator appears when the switch is successful.

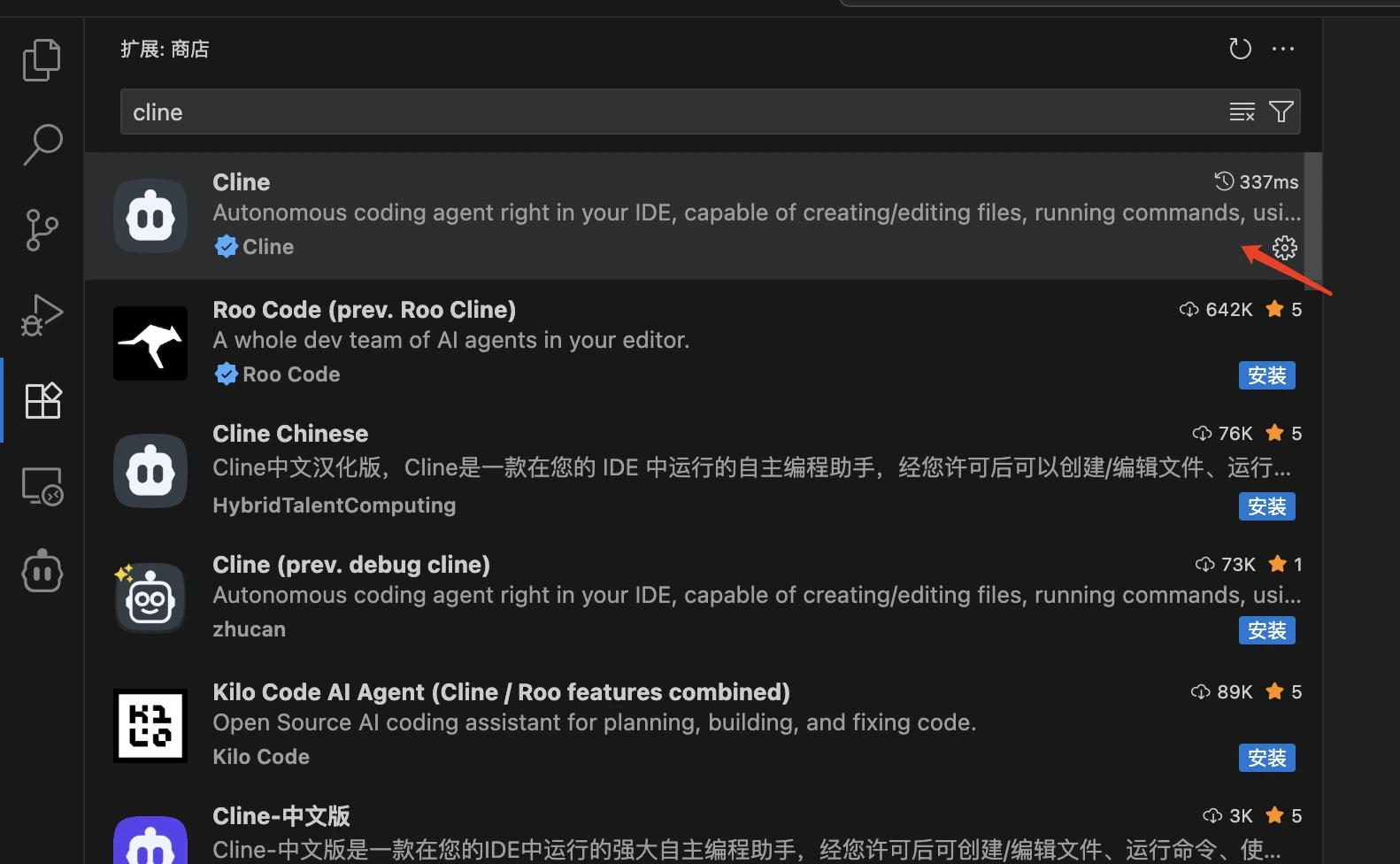

Using Kimi K2.5 model in Cline

Install Cline

- Open VS Code

- Click the Extensions icon in the left activity bar (or use the shortcut `Ctrl+Shift+X` / `Cmd+Shift+X`)

- Enter `cline` in the search box

- Find the `Cline` extension (usually published by Cline Team)

- Click the `Install` button to install it

- After installation, you may need to restart VS Code

Verify Installation

After installation, you can:

- See the Cline icon in the left activity bar of VS Code

- Or search for “Cline” related commands through the command palette (`Ctrl+Shift+P` / `Cmd+Shift+P`) to verify the installation was successful

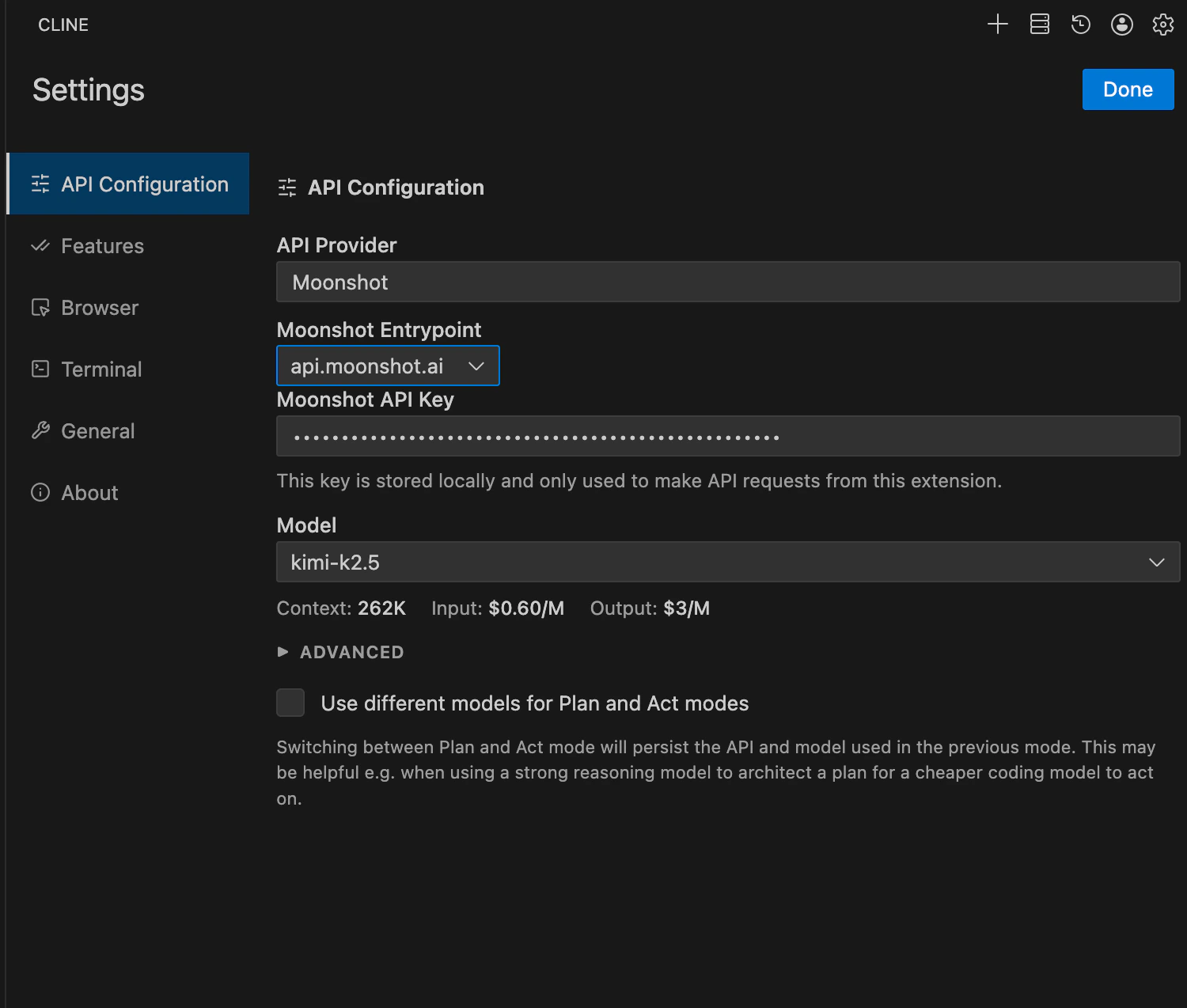

Official Recommendation: Configure Moonshot Provider to use Kimi K2.5 model

- Select ‘Moonshot’ as the API Provider

- Select ‘api.moonshot.ai’ as the Moonshot Entrypoint

- Configure the Moonshot API Key with the Key obtained from the Kimi Open Platform

- Select ‘kimi-k2.5’ as the Model

- Check ‘Disable browser tool usage’ under Browser

- Click ‘Done’ to save the configuration

Experience the effects of Kimi K2.5 model in Cline

- We asked the Kimi K2.5 model to write a Snake game

- Game effects

Using Kimi K2.5 model in RooCode

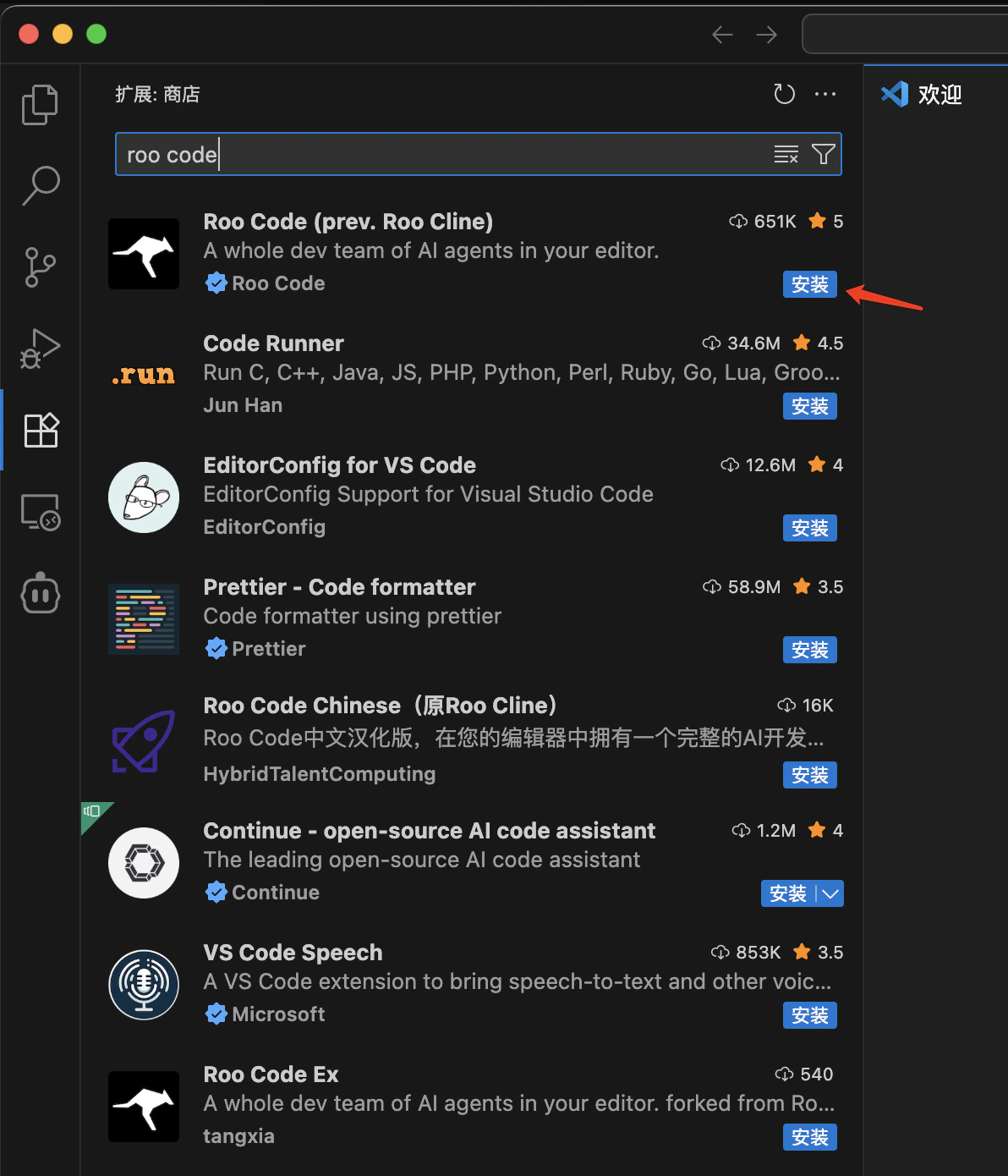

Install RooCode

- Open VS Code

- Click the Extensions icon in the left activity bar (or use the shortcut `Ctrl+Shift+X` / `Cmd+Shift+X`)

- Enter `roo code` in the search box

- Find the `Roo Code` extension (usually published by RooCode Team)

- Click the “Install” button to install it

- After installation, you may need to restart VS Code

Verify Installation

After installation, you can:

- See the RooCode icon in the left activity bar of VS Code

- Or search for “RooCode” related commands through the command palette (`Ctrl+Shift+P` / `Cmd+Shift+P`) to verify the installation was successful

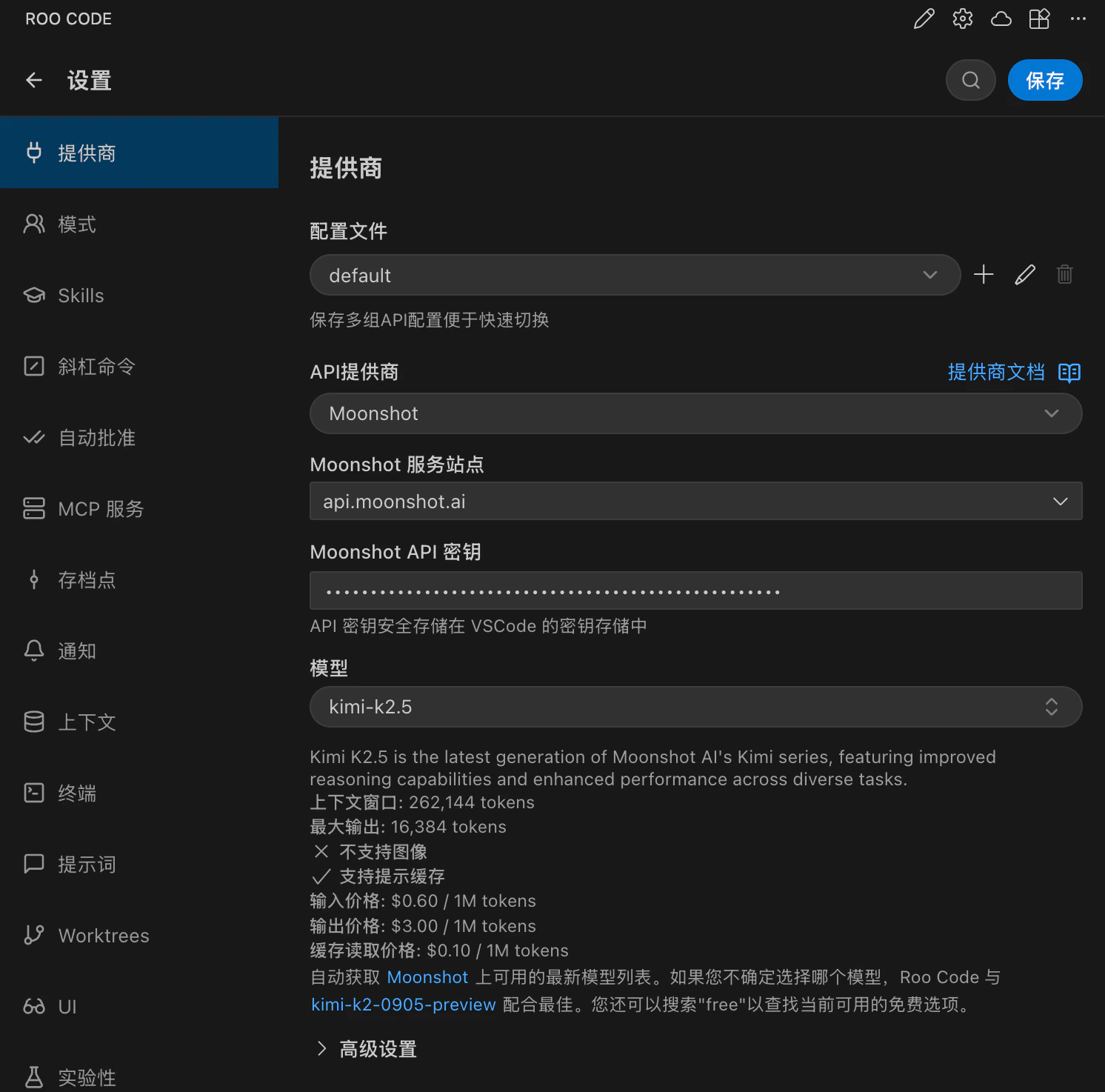

Official Recommendation: Configure Moonshot Provider to use Kimi K2.5 model

- Select ‘Moonshot’ as the API Provider

- Select ‘api.moonshot.ai’ as the Moonshot Entrypoint

- Configure the Moonshot API Key with the Key obtained from the Kimi Open Platform

- Select ‘kimi-k2.5’ as the Model

- Check ‘Disable browser tool usage’ under Browser

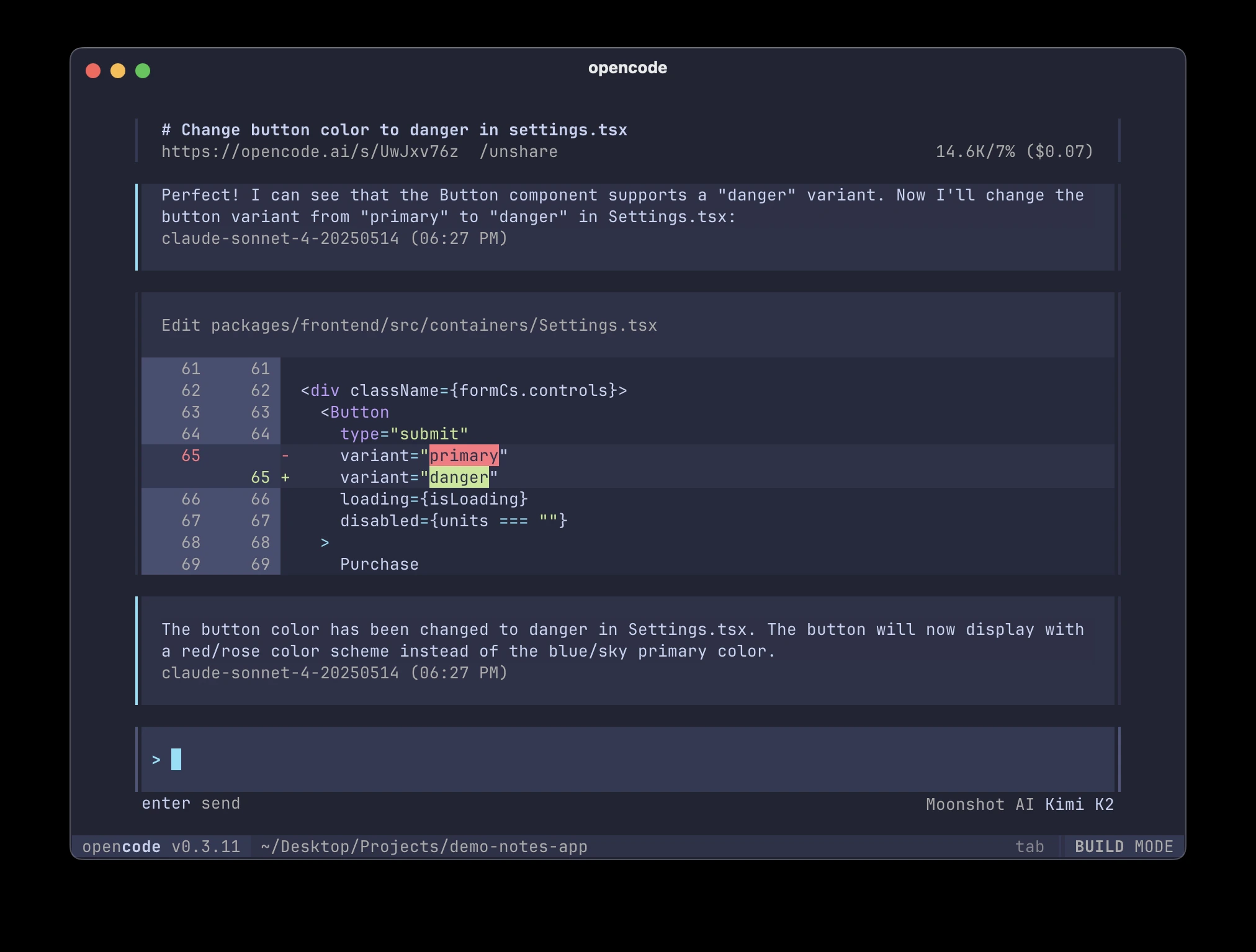

Using Kimi K2.5 model in OpenCode

Install OpenCode

The easiest way to install OpenCode is through the install script.

Configure API key

- Run `opencode auth login` and select Moonshot AI.

- Enter your Moonshot AI API key.

- Run `opencode` to launch OpenCode.

Use the `/models` command to select the Kimi K2.5 model.

Direct API Calls to Kimi K2.5 model

- python

- curl

- node.js
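For a dependency-free illustration of a direct call, the sketch below uses only the Python standard library. It is not the official sample: it assumes the API exposes an OpenAI-compatible chat endpoint at `https://api.moonshot.ai/v1/chat/completions` and returns the reply under `choices[0].message.content`. `MOONSHOT_API_KEY`, `build_chat_request` and `ask_kimi` are hypothetical names.

```python
import json
import os
import urllib.request

# Assumed OpenAI-compatible endpoint; confirm it in the platform documentation.
CHAT_URL = "https://api.moonshot.ai/v1/chat/completions"


def build_chat_request(api_key: str, prompt: str) -> urllib.request.Request:
    """Prepare a single-turn chat request for the kimi-k2.5 model."""
    payload = {
        "model": "kimi-k2.5",
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask_kimi(api_key: str, prompt: str) -> str:
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(api_key, prompt)
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("MOONSHOT_API_KEY")  # hypothetical variable name
    if key:
        print(ask_kimi(key, "Write a one-line Python hello world."))
    else:
        print("Set MOONSHOT_API_KEY to send a request.")
```

Keeping request construction separate from the network call makes the payload easy to inspect or log before any tokens are spent.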